
According to research from MarketsAndMarkets, the global smart glasses market is projected to grow from $878.8 million in 2024 to over $4.12 billion by 2030. That compound annual growth rate of nearly 30% suggests that smart glasses and AR applications are moving beyond toys for geeks and becoming essential daily infrastructure.
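
The "nearly 30%" figure is a compound annual growth rate (CAGR). As a quick sanity check, this short sketch recomputes it from the two market sizes quoted above over the six years from 2024 to 2030. It uses no data beyond those two figures.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: the constant yearly rate that turns start_value into end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted above, in millions of USD; 2024 -> 2030 is six compounding years.
rate = cagr(878.8, 4120.0, 2030 - 2024)
print(f"CAGR: {rate:.1%}")  # prints "CAGR: 29.4%"
```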

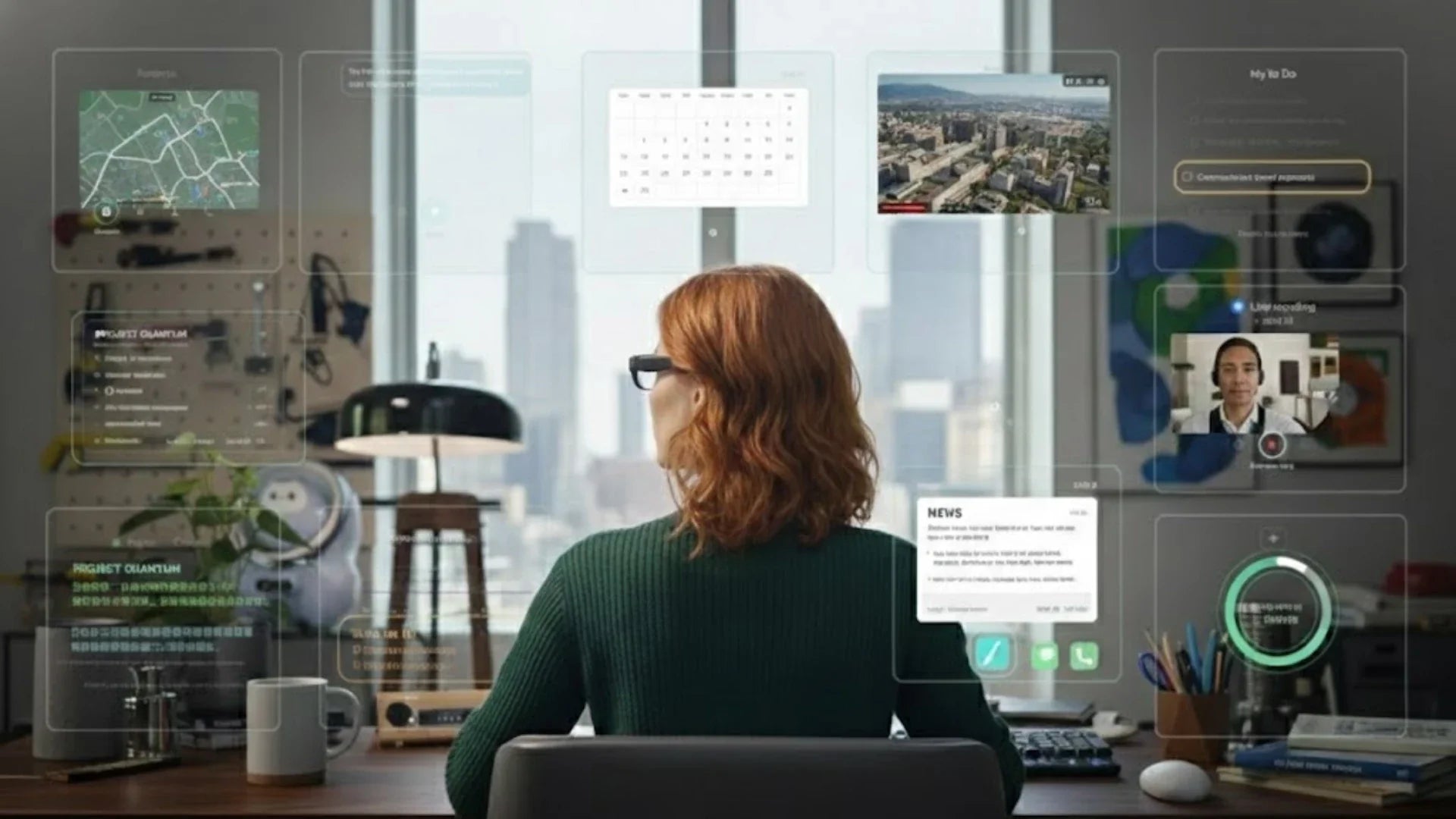

Smart glasses have evolved into productivity tools and gateways to immersive entertainment that are changing how people work and live. As AR and AI capabilities converge in the same pair of glasses, efficiency gains in areas like navigation, remote collaboration, medical training, and real-time translation can now be measured in concrete numbers. In this article, we examine 10 typical augmented reality use cases to help you decide which are ready for mainstream use and which features are worth trying today.

What Are Smart Glasses and How Do They Use Augmented Reality?

Understanding the technical foundation makes it easier to see the generational gaps in real-world performance and to decide which device fits your needs. Before exploring the top 10 use cases, we need to clarify a few easily confused concepts, specifically the differences between smart glasses, AI glasses, and AR glasses, as well as the baseline hardware and software requirements for a quality pair of smart glasses in 2026.

In our hands-on research, mainstream smart glasses fall roughly into three capability levels.

The first level consists of basic display models. These are essentially head-mounted displays that use Micro OLED or MicroLED screens to project visuals from a phone or console directly in front of your eyes, which makes them ideal for gaming and movies. Display specifications like those of the RayNeo Air 4 Pro (1080p resolution, a 120Hz refresh rate, HDR10 support, and a perceived brightness of approximately 1200 nits) have become the standard for high-end, entertainment-focused AR glasses.

The second level includes AR glasses with spatial awareness and sensor fusion. Using IMUs, binocular cameras, and spatial algorithms, these devices recognize your head posture and the structure of your environment to accurately overlay virtual images onto the real world.
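
Production glasses use their own, far more sophisticated sensor-fusion pipelines. As a much simpler stand-in, a complementary filter illustrates the basic idea of fusing IMU signals into a head-posture estimate: the gyroscope is accurate over short intervals but drifts, while the accelerometer's gravity reading is noisy but drift-free. This sketch estimates pitch only; the axis convention, the 0.98 blend factor, and the simulated sensor values are assumptions for illustration.

```python
import math


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch (rad) implied by gravity, assuming ax = -g*sin(pitch), az = g*cos(pitch).

    Axis conventions vary between devices; this one is an assumption.
    """
    return math.atan2(-ax, math.sqrt(ay * ay + az * az))


def complementary_pitch(prev_pitch: float, gyro_rate: float, accel: tuple,
                        dt: float, alpha: float = 0.98) -> float:
    """Blend integrated gyro rate (rad/s) with accelerometer tilt; alpha weights the gyro."""
    gyro_estimate = prev_pitch + gyro_rate * dt  # short term: integrate angular rate
    # Long term: pull the estimate toward the drift-free gravity reading.
    return alpha * gyro_estimate + (1 - alpha) * accel_pitch(*accel)


# Simulated wearer holding their head tilted 10 degrees; the gyro has a small bias.
true_pitch = math.radians(10)
g = 9.81
accel = (-g * math.sin(true_pitch), 0.0, g * math.cos(true_pitch))
pitch = 0.0
for _ in range(1000):  # 10 s of samples at 100 Hz
    pitch = complementary_pitch(pitch, gyro_rate=0.01, accel=accel, dt=0.01)
print(f"estimated pitch: {math.degrees(pitch):.2f} deg")
```

The estimate settles slightly above 10 degrees: the accelerometer term stops the gyro bias from accumulating without bound, but a small steady offset remains.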

The third level is AI smart glasses, the category that has drawn the most intense discussion among real users on Reddit and YouTube over the past two years. These devices often feature independent operating systems and local AI capabilities, supporting voice assistants, real-time translation, AI summarization, and semantic search. The core value of this category lies less in what you are looking at and more in what the device can automatically understand for you.

How Augmented Reality Enhances Real-World Vision

The core of augmented reality lies in unobtrusively overlaying a small amount of key information at the right time and place. Through extensive user testing, we have found that visual strain increases significantly for most users when displayed content covers more than one-third of the field of view, especially while driving, exercising, or walking.
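
A layout check against the one-third guideline can be sketched in a few lines. This assumes "field of view" means the display's angular field of view and approximates angular area as width times height; the FOV and panel sizes below are hypothetical.

```python
MAX_COVERAGE = 1 / 3  # comfort guideline quoted in the text


def coverage_fraction(content_deg: tuple, fov_deg: tuple) -> float:
    """Share of the field of view occupied by a rectangular panel.

    Both arguments are (horizontal, vertical) angular sizes in degrees; angular
    area is approximated as width * height, which is reasonable for small FOVs.
    """
    content_w, content_h = content_deg
    fov_w, fov_h = fov_deg
    # Content larger than the FOV is clipped to it.
    return (min(content_w, fov_w) * min(content_h, fov_h)) / (fov_w * fov_h)


def is_comfortable(content_deg: tuple, fov_deg: tuple) -> bool:
    return coverage_fraction(content_deg, fov_deg) <= MAX_COVERAGE


FOV = (40.0, 22.5)  # hypothetical display field of view, in degrees
print(is_comfortable((20.0, 10.0), FOV))  # small navigation card: True (about 22% coverage)
print(is_comfortable((30.0, 15.0), FOV))  # large panel: False (50% coverage)
```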

As a result, mature AR glasses prioritize the real world in their interface design, keeping virtual information subtle and positioned in peripheral areas. For example, navigation tools might only overlay directional arrows and distances at intersections. In remote maintenance, the glasses might only show screw numbers and torque specs on the surface of a machine. For medical training, they might only highlight key blood vessels, nerves, and operational paths. This visual hierarchy actually speeds up decision-making in the real world while reducing the fragmentation of attention.

At the same time, we have verified a consistent pattern among doctors, engineers, and athletes: when AR prompts arrive with less than 50 milliseconds of latency at a stable refresh rate of around 60Hz, the eye naturally perceives virtual content as part of reality, and users' movements and actions become smoother. This is why the new generation of AR glasses uses upgraded SoCs, sensors, and Wi-Fi modules to push end-to-end latency as low as possible.
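
To see why refresh rate and latency are linked, here is a rough latency budget. The per-stage costs are hypothetical, and we assume rendering takes one frame while the finished frame may wait up to one more refresh before it reaches the display.

```python
LATENCY_BUDGET_MS = 50.0  # threshold quoted in the text


def end_to_end_ms(refresh_hz: float, sensor_ms: float = 2.0, tracking_ms: float = 8.0) -> float:
    """Rough worst-case motion-to-photon latency for one AR prompt.

    sensor_ms and tracking_ms are hypothetical stage costs. Rendering is assumed
    to take one frame, and the finished frame may wait up to one more refresh
    before it is scanned out to the display.
    """
    frame_ms = 1000.0 / refresh_hz
    return sensor_ms + tracking_ms + 2 * frame_ms


for hz in (30, 60, 120):
    total = end_to_end_ms(hz)
    print(f"{hz:>3} Hz: {total:5.1f} ms, within budget: {total < LATENCY_BUDGET_MS}")
```

Under these assumptions, a 60Hz display (about 16.7 ms per frame) fits inside the 50 ms budget with room to spare, while 30Hz spends most of the budget just waiting for frames.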

Key Technologies Behind AR Smart Glasses: Sensors, AI, Spatial Computing

From a fundamental technical perspective, we can break down the key capabilities of AR smart glasses into three pillars: sensing, processing power, and spatial computing. The sensing layer typically includes a six-axis or nine-axis IMU, ambient light sensors, ToF or structured light depth sensors, and one or two forward-facing cameras. High-end models commonly use a dual-camera setup. This serves two purposes: high-resolution capture and improved spatial positioning accuracy through stereo parallax.
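
Stereo parallax turns the horizontal shift (disparity) of a point between the two camera images into depth. For two parallel, rectified cameras the standard relation is Z = f·B / d, where f is the focal length in pixels, B the baseline between cameras, and d the disparity in pixels. The camera parameters below are hypothetical.

```python
def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth (m) of a point seen by two parallel, rectified cameras: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_err_px: float = 1.0) -> float:
    """Approximate depth uncertainty: dZ ~= Z**2 / (f * B) * dd, so it grows with distance squared."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


# Hypothetical camera parameters: 700 px focal length, 6 cm baseline.
z = depth_from_disparity(21.0, focal_px=700.0, baseline_m=0.06)
print(f"depth: {z:.2f} m, error per pixel of disparity: {depth_error(z, 700.0, 0.06):.3f} m")
```

The error term shows why the camera layout matters: a wider baseline or higher-resolution cameras (larger f in pixels) shrink the depth uncertainty at a given distance.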

Processing power has improved remarkably over the past two years. The new Snapdragon AR platform, built on a 4nm process, offers a major leap in energy efficiency and AI inference performance. It can handle gesture recognition, voice transcription, object identification, and basic scene understanding directly on the glasses without relying entirely on the cloud.

The spatial computing layer determines the quality of the AR experience. Products supporting 6DoF (six degrees of freedom) usually fuse binocular camera and IMU data through visual-inertial odometry (VIO), supplemented by environment point-cloud construction and plane detection. This allows virtual objects to stay stably attached to tables, walls, or device surfaces in the real world. During our hands-on debugging, we focus heavily on drift during long-term wear. Generally, if a virtual marker drifts no more than 1cm while the wearer moves around a 5-meter range, users perceive the experience as very stable.
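
The 1cm stability criterion is straightforward to measure from a tracking log: record where the glasses believe a fixed, world-anchored marker is while the wearer walks around, then take the largest offset from the first estimate. The log format and values here are hypothetical.

```python
import math


def max_drift_cm(anchor_estimates_m: list) -> float:
    """Largest distance (cm) between any estimate of a fixed anchor and the first estimate."""
    origin = anchor_estimates_m[0]
    return max(math.dist(p, origin) for p in anchor_estimates_m) * 100


DRIFT_LIMIT_CM = 1.0  # stability threshold quoted in the text

# Hypothetical tracker log: estimated position (x, y, z in metres) of one table-mounted
# marker at successive moments while the wearer walks around the room.
log = [(0.000, 0.000, 2.000), (0.003, 0.000, 2.002), (0.006, -0.002, 2.004)]
drift = max_drift_cm(log)
print(f"max drift: {drift:.2f} cm, stable: {drift <= DRIFT_LIMIT_CM}")
```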

Navigation and Real-Time Directions with Smart Glasses

Navigation is the AR use case that we found most likely to hook average users immediately, especially commuters, cyclists, and road-trippers. Once you get used to heading out with just your glasses—without pulling out your phone or looking down at a dashboard—traditional navigation feels incredibly clunky.

In city walking navigation, AR glasses can overlay arrows directly onto the intersection ahead while showing distance and time estimates. Combined with point-of-interest (POI) data, they can also display store names and ratings above shopfronts, which cuts down on time spent searching. Our testing data from complex malls and airports shows that on long routes with frequent floor changes, the average time to find a destination drops by about 20% to 30%, and instances of getting lost or needing to double-check directions also decrease significantly.
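
Choosing which arrow to draw at an intersection comes down to comparing the wearer's heading with the bearing to the next turn. This sketch uses the haversine formula for distance and the standard initial-bearing formula; the walking speed, the angle thresholds, and the coordinates are hypothetical choices, not any product's logic.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius
WALKING_SPEED_MPS = 1.4     # assumed average walking pace


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Haversine distance (m) and initial bearing (deg clockwise from north) from point 1 to point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    bearing = (math.degrees(math.atan2(y, x)) + 360) % 360
    return distance, bearing


def arrow_for(heading_deg, bearing_deg):
    """Choose an overlay arrow from the target bearing relative to the wearer's heading."""
    relative = (bearing_deg - heading_deg + 540) % 360 - 180  # normalised to [-180, 180)
    if abs(relative) <= 30:
        return "straight"
    if abs(relative) >= 150:
        return "u-turn"
    return "right" if relative > 0 else "left"


# Hypothetical positions: the wearer (facing north) and the next intersection on the route.
dist, bearing = distance_and_bearing(51.5007, -0.1246, 51.5010, -0.1230)
print(f"{arrow_for(heading_deg=0, bearing_deg=bearing)}, {dist:.0f} m, ~{dist / WALKING_SPEED_MPS:.0f} s")
```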

For cyclists and motorcyclists, the real pain points are wind noise and the difficulty of using touchscreens with gloves. There is also the safety risk of constantly looking down at a screen at high speeds. High-brightness, high-contrast AR displays can show your route, speed, and upcoming turn prompts at the bottom of your field of vision without blocking the road ahead.

Remote Assistance and Field Service Support

Remote collaboration and maintenance support are among the primary drivers for enterprise AR glasses adoption. Many engineers and field technicians on Reddit complain that they used to solve problems by juggling phone calls, taking photos, and flipping through paper manuals. In that environment, fumbling and mistakes were almost guaranteed.

In a typical remote maintenance workflow, a field engineer wears AR glasses to stream a first-person view to a remote expert. The expert circles items or adds annotations on their screen, and these are overlaid back onto the engineer's field of view, pointing directly to specific screws, wiring terminals, or module locations. As long as the glasses support 6DoF spatial tracking and have dual cameras, and a stable Wi-Fi 6 or 5G connection is available, they can deliver high-definition annotations and feedback in near real time.
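
One way annotations can stay pinned to a screw is to store each mark as a 3D anchor and re-project it into the wearer's view every frame using the current 6DoF pose. This minimal sketch uses the standard pinhole camera model; the pose representation, intrinsics, and anchor position are hypothetical.

```python
def project_point(p_world, R, t, fx, fy, cx, cy):
    """Project a world-space 3D point into the wearer's camera image (pinhole model).

    R (3x3 nested lists) and t (length 3) are the current camera pose, mapping world
    coordinates to camera coordinates: p_cam = R @ p_world + t, with +z pointing forward.
    fx, fy, cx, cy are camera intrinsics in pixels. Returns None if the point is behind the camera.
    """
    x, y, z = (sum(R[i][j] * p_world[j] for j in range(3)) + t[i] for i in range(3))
    if z <= 0:
        return None
    return fx * x / z + cx, fy * y / z + cy


# Hypothetical setup: the expert's circle is anchored on a screw 2 m ahead and 10 cm to the right.
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
screw = (0.1, 0.0, 2.0)
print(project_point(screw, IDENTITY, (0.0, 0.0, 0.0), fx=800, fy=800, cx=640, cy=360))   # (680.0, 360.0)
# After the wearer steps 10 cm to the right, the same anchor lands at the image centre.
print(project_point(screw, IDENTITY, (-0.1, 0.0, 0.0), fx=800, fy=800, cx=640, cy=360))  # (640.0, 360.0)
```

Because the anchor lives in world coordinates, only the pose changes from frame to frame; that is why accurate 6DoF tracking matters more here than raw camera resolution.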

Healthcare and Medical Training Applications

Healthcare and medical education are among the most demanding yet rewarding fields for AR applications. During surgery, surgeons must handle imaging data, patient records, and real-time feedback simultaneously, and every glance away at a screen can break their operational rhythm. AR glasses can overlay preoperative images, vital signs, and even planned 3D surgical paths near the surgical site, allowing doctors to keep their eyes on the task.

In teaching scenarios for medical students and residents, we often see a single pair of glasses solve two problems: trainees cannot see the procedure well, and they cannot easily tell which steps matter most. First-person footage records every movement and hand detail of the lead surgeon. Afterward, spatial reconstruction and annotations can highlight key steps while overlaying anatomical structures and cautionary notes. Students no longer have to fight for a view in a crowded operating room; instead, they can review the procedure repeatedly in a simulated environment.

Of course, the medical field has extremely strict requirements for stability, latency, and clarity. This is why most current applications focus on teaching, preoperative planning, and auxiliary info display rather than direct command execution. The most common feedback we hear from doctors is the need for high contrast, low latency, and simple interaction methods. For instance, using foot switches or fixed gestures to quickly swap info layers ensures no extra burden is placed on their hands.

Education and Immersive Learning Experiences

In the field of education, the value of AR glasses is primarily seen in two areas: reconstructing abstract concepts in 3D space and bringing the learning process closer to real-world applications. In traditional STEM teaching, many concepts remain highly abstract for students—such as electromagnetic fields, molecular structures, or complex engineering systems. It is often difficult to truly understand these through flat, 2D textbooks.

For example, when students wear AR glasses, they can view 1:1 scale 3D models directly in the classroom. They might see planetary orbits revolving around their seating area, molecular structures slowly rotating above their desks, or exploded views of complex machinery displayed in layers. In campus experiments, we observed that when learning content is presented in 3D, students' average error rates on conceptual questions drop significantly, and the frequency of their questions increases.

Retail and Virtual Shopping Experiences

Interest in AR glasses within retail and e-commerce is shifting from experimental marketing campaigns toward structural, long-term investment. The most common complaints we see from users during research are the inability to judge real size and texture when shopping online, and the excessive time spent comparing specs and reviews at shelves when shopping offline.

AR glasses can automatically identify products on a shelf as a user walks into a store, overlaying key parameters, price fluctuation curves, and user sentiment tags. This turns what used to be a manual search on a phone into an at-a-glance information layer. For bulky items like furniture or appliances, users can virtually place them in their homes ahead of time to verify if the size and style match, reducing the likelihood of returns.
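
Once the glasses have measured the space where a piece of furniture would go (we assume those measurements are available), the basic size question is a dimensional check. This sketch allows the item to be turned 90 degrees on the floor and keeps a hypothetical clearance on every side.

```python
def fits(item_cm, space_cm, clearance_cm=5.0):
    """Check whether an item (width, depth, height) fits a space, allowing rotation on the floor.

    clearance_cm is kept free on every side of the footprint; height must simply fit.
    """
    w, d, h = item_cm
    space_w, space_d, space_h = space_cm
    if h > space_h:
        return False
    return any(
        fw + 2 * clearance_cm <= space_w and fd + 2 * clearance_cm <= space_d
        for fw, fd in ((w, d), (d, w))  # as placed, or turned 90 degrees
    )


sofa = (200, 90, 85)  # hypothetical product dimensions from a listing
print(fits(sofa, (220, 120, 250)))  # True
print(fits(sofa, (205, 120, 250)))  # False: too wide either way round
```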

When shopping online, AR glasses act more as a second screen. They can project a 3D model of a product onto your desk, allowing you to view it from all angles and compare its dimensions to real-world objects at a 1:1 scale. Furthermore, combined with AI recommendation systems, the device can automatically retrieve historical orders and preferences while you browse an item. It can remind you of your experience and feedback on similar models purchased previously, helping you avoid repeating past mistakes.

Gaming and Entertainment in the Real World

Gaming and entertainment were the first sectors to draw public attention to AR glasses. Over the past few years, however, users have frequently criticized insufficient brightness, low refresh rates, nose-bridge pressure during long wear, and a thin content ecosystem. With upgrades in display and acoustic hardware, these pain points are gradually being resolved. In tests with high-frame-rate action games, players generally reported less dizziness and fatigue on glasses that held a stable 120Hz refresh rate with low latency than on traditional 60Hz devices.

Regarding content formats, we see two strengthening trends. The first is moving traditional big-screen games and movies onto portable 200-inch virtual screens, like the one the RayNeo Air 4 Pro provides, turning lightweight glasses into a portable home theater. The second is the exploration of true AR gaming, such as overlaying virtual mission points on real streets or laying out virtual racetracks in a living room. When playing these games, we pay close attention to the balance between light blocking and transparency: we want to block ambient light for immersive content while staying aware of the real environment, especially when pets or children are nearby.

Workplace Productivity and Collaboration

In knowledge work and corporate collaboration, the key value of AR glasses lies in expanding cognitive bandwidth. Many programmers, product managers, and designers sharing on Reddit express a consistent sentiment: while traditional multi-monitor setups are efficient, they require a fixed workstation, yet the proportion of remote and mobile work continues to rise.

With AI+AR glasses like the RayNeo X3 Pro, we can instantly summon multiple virtual screens anywhere. You can place a code window directly in front of you while pinning documents and chat windows to the walls on either side, creating an office-like environment in a cafe or hotel room. As long as rendering latency stays low and text clarity meets visual thresholds, productivity in this virtual multi-screen setup can approach or even exceed that of a traditional triple-monitor desktop.
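
Arranging several virtual screens around the wearer can be sketched as placing panel centres on a virtual cylinder, which is a simplification: real devices may instead anchor panels to detected walls. The radius, angles, and coordinate convention are hypothetical.

```python
import math


def panel_layout(yaws_deg, radius_m=1.2, height_m=0.0):
    """Centre positions for virtual screens on a cylinder around the wearer.

    Coordinates: x to the right, y up, -z straight ahead; yaw 0 is directly in front
    and positive yaw turns right. Each panel is assumed to be rotated to face the wearer.
    """
    return [
        (radius_m * math.sin(math.radians(yaw)), height_m, -radius_m * math.cos(math.radians(yaw)))
        for yaw in yaws_deg
    ]


def panel_width_m(angular_width_deg, radius_m=1.2):
    """Physical width of a flat panel, centred radius_m away, that spans the given angle."""
    return 2 * radius_m * math.tan(math.radians(angular_width_deg) / 2)


# Code window ahead, documents to the right, chat to the left (hypothetical angles).
for name, pos in zip(("code", "docs", "chat"), panel_layout([0, 60, -60])):
    print(name, tuple(round(c, 2) for c in pos))
```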

For meetings, AR glasses can display meeting minutes, key metrics, and real-time automated subtitles at the edge of your vision, eliminating the need to frequently switch apps or split screens. When AI automatically generates summaries and to-do lists—pinning them as spatial tags in your workspace after the meeting—the probability of forgetting important tasks drops significantly.

Translation and Real-Time Subtitles

Real-time translation and voice-to-subtitle features have been among the most discussed and frequently used functions over the past two years. User feedback in communities often mentions that traditional mobile translation apps cause awkward pauses during face-to-face conversations, requiring the phone to be passed back and forth. The advantage of smart glasses is that translation is layered directly onto the real conversation.

When a device possesses scene-understanding capabilities, it can adjust translation styles and terminology based on the current location, app context, and user history. For example, it can prioritize keeping original professional terms during technical meetings or output more polite and idiomatic expressions while traveling. Feedback from users during international trips and conferences points to the same conclusion: once translation lives inside the glasses, the psychological barrier to cross-language communication drops sharply, and people are more willing to speak.

Fitness, Sports, and Performance Tracking

In fitness and sports, the most direct benefit of wearing smart glasses is obtaining real-time feedback without breaking focus or form. Runners and cyclists often complain that watch screens are too small and phones are inconvenient to check, leading them to either give up on data tracking or frequently interrupt their rhythm.

AR glasses can continuously display key metrics like heart rate, pace, cadence, and power at the edge of your vision. When a metric deviates from the target range, color changes or subtle animations prompt you to adjust your pace. For interval training, the system can use prominent color blocks to signal high-intensity or recovery phases without requiring any button presses. For indoor strength training and ball sports, when glasses work with cameras and AI models to recognize posture, they can immediately point out angle deviations or center-of-gravity issues after a set.
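
The color-change and interval cues described above reduce to two small rules: classify a live metric against its target range, and work out which phase of a repeating interval the athlete is in. The colors, the warning band, and the heart-rate targets are hypothetical.

```python
def zone_color(value, low, high, margin):
    """Overlay colour for a live metric against its target range [low, high].

    Within range -> green; within `margin` outside it -> amber; further out -> red.
    """
    if low <= value <= high:
        return "green"
    if low - margin <= value <= high + margin:
        return "amber"
    return "red"


def interval_phase(elapsed_s, work_s, rest_s):
    """Which block of a repeating work/recovery interval the athlete is in."""
    position = elapsed_s % (work_s + rest_s)
    return "work" if position < work_s else "recover"


# Hypothetical heart-rate target of 140-160 bpm with a 5 bpm warning band.
for bpm in (150, 163, 172):
    print(bpm, zone_color(bpm, 140, 160, 5))  # green, amber, red
print(interval_phase(95, work_s=30, rest_s=60))  # 95 s into 30/60 intervals -> "work"
```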

Professional athletes and coaches place even higher value on post-training analysis. By overlaying spatial trajectories, speed curves, and physical data, they can more accurately determine if technical improvements have led to actual performance gains. In our tests with coaches, using AR to replay training sessions with overlaid key metrics often makes it easier for athletes to understand their mistakes than traditional video playback, as data and visuals are presented simultaneously in space.

Smart Homes and IoT Control

The spread of smart homes and IoT devices has increased the number of control points in the house, but traditional mobile app control has led to noticeable interface fatigue. Many users report that different brands require multiple apps, making switching cumbersome and loading slow.

AR glasses provide a more intuitive way to interact. When you walk into the living room, a virtual control panel automatically overlays in your view, corresponding to the TV, lights, AC, and speakers. You can adjust brightness, temperature, and volume with just a gaze or a simple gesture. For homes supporting spatial audio or multi-room setups, you can see the volume distribution across the room layout and complete settings as easily as dragging an icon.

In more advanced scenarios, when enough sensors and automation rules are deployed, AR glasses can serve as a home status HUD. They can remind you which window is open or which power outlet is still on before you leave, or overlay expiration dates and recipe suggestions when you open the fridge. In real home testing, we found that as long as the UI design remains restrained and avoids cluttering the vision, users quickly form new habits and gradually reduce their dependence on phones.
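
A restrained home HUD mostly means surfacing exceptions rather than every device. This sketch assumes device states are already available from a smart-home hub; the `Device` type, the rules, and the device names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    kind: str   # e.g. "window", "outlet", "light"
    state: str  # e.g. "open", "closed", "on", "off"


# Hypothetical rules: which states deserve a reminder before leaving home.
LEAVE_HOME_RULES = {"window": "open", "outlet": "on"}


def leaving_reminders(devices):
    """Short HUD messages for devices left in a state flagged by LEAVE_HOME_RULES."""
    return [
        f"{d.name}: still {d.state}"
        for d in devices
        if LEAVE_HOME_RULES.get(d.kind) == d.state
    ]


home = [
    Device("Bedroom window", "window", "open"),
    Device("Kitchen window", "window", "closed"),
    Device("Iron outlet", "outlet", "on"),
    Device("Hall light", "light", "on"),
]
print(leaving_reminders(home))  # ['Bedroom window: still open', 'Iron outlet: still on']
```

Only two of the four devices produce a message, which keeps the overlay short enough to read at a glance on the way out.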

Future Trends in Augmented Reality and Smart Glasses

From an industry and technical standpoint, the key trends for the coming years point in three directions: AI-driven contextual understanding, lighter and more aesthetic industrial design, and a continued shift in applications from professional users to everyday consumers.

More users no longer view smart glasses as a niche tech gadget. Instead, they see them as the next generation of personal screens, or a second-brain interface. They care more about battery life, comfort, the content ecosystem, and synergy with existing devices than about pushing the limits of a single spec.

As the ecosystem matures, we expect everyday applications to evolve from niche needs into a foundational layer of daily life. Navigation, translation, remote collaboration, and entertainment will become core capabilities, while other vertical applications will be installed as needed, much like apps on a smartphone.

Conclusion

We tend to resist new technology right up until the day it makes life simpler. AR is on that path now, and these 10 augmented reality applications are just the beginning of how it will change our lives. It might knock on your door sooner than you think.
